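I had always assumed the cascading architecture was never made public. This entire article is reposted from the community wiki: https://wiki.openstack.org/wiki/OpenStack_cascading_solution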

Split into two open source projects

The cascading layer has been split and decoupled into the “Tricircle” project (https://wiki.openstack.org/wiki/Tricircle) and the “Trio2o” project (https://wiki.openstack.org/wiki/Trio2o).

The OpenStack cascading solution is version 1 of these two open source projects. It has already been delivered commercially and is running in many public, hybrid and private clouds.

Overview

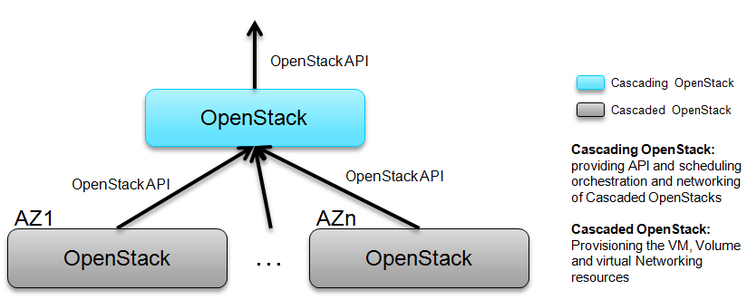

The OpenStack cascading solution is designed to integrate multi-site OpenStack clouds. Along the way, it also addresses OpenStack's scalability, for example for a cloud with on the order of one million VMs geographically distributed across many data centers.

- The parent OpenStack exposes the standard OpenStack API

- The parent OpenStack manages many child OpenStacks using the standard OpenStack API

- Each child OpenStack functions as an Amazon-like availability zone and is hidden by the parent OpenStack

- Cascading OpenStack: the parent OpenStack, providing the API as well as scheduling and orchestration of the cascaded OpenStacks

- Cascaded OpenStack: the child OpenStack, provisioning VMs, volumes and virtual networking resources

The cascading OpenStack addresses virtual resources (VMs, volumes, networks, ...) and routes requests to the corresponding cascaded OpenStack, where those resources are allocated and interconnected. The cascading OpenStack is purely a control layer, and no data plane is involved.

OpenStack cascading is “OpenStack orchestrating OpenStacks”. It concentrates mainly on API aggregation. It provides tenant-level cross-OpenStack IP address management, networking automation, image replication/registration, etc. It also gives each tenant a virtual OpenStack experience, even though his resources are distributed across multiple back-end OpenStack instances. After cascading, a tenant only needs to access one API endpoint. For tenants it looks like a single virtual region. The multiple child OpenStacks are not visible, because the cascading OpenStack has integrated and hidden them. The tenant sees multiple “availability zones” across which his resources are distributed, and internally each child OpenStack works exactly like an Amazon-style availability zone.
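The following is a minimal Python sketch of this addressing-and-routing role. It assumes a simple in-memory registry. `CascadedSite`, `CascadingRouter` and the `example.com` endpoints are hypothetical names chosen for illustration, not taken from the PoC. A real cascading OpenStack forwards requests through the standard OpenStack REST APIs rather than just returning URLs.

```python
from dataclasses import dataclass, field


@dataclass
class CascadedSite:
    """Hypothetical handle for one child (cascaded) OpenStack."""
    az: str        # availability zone name shown to tenants
    endpoint: str  # child API endpoint (illustrative URL only)


@dataclass
class CascadingRouter:
    """Control-plane-only router (hypothetical): it records which cascaded
    OpenStack hosts each virtual resource and routes requests there.
    No data-plane traffic passes through it."""
    sites: dict = field(default_factory=dict)      # az -> CascadedSite
    placement: dict = field(default_factory=dict)  # resource id -> az

    def add_site(self, site: CascadedSite) -> None:
        self.sites[site.az] = site

    def availability_zones(self) -> list:
        """What a tenant sees: AZ names only, never the child endpoints."""
        return sorted(self.sites)

    def record(self, resource_id: str, az: str) -> None:
        """Remember where a VM, volume or network was allocated."""
        if az not in self.sites:
            raise KeyError(f"unknown availability zone: {az}")
        self.placement[resource_id] = az

    def route(self, resource_id: str) -> str:
        """Return the endpoint of the cascaded OpenStack owning the resource."""
        return self.sites[self.placement[resource_id]].endpoint


router = CascadingRouter()
router.add_site(CascadedSite("az-1", "https://child1.example.com"))
router.add_site(CascadedSite("az-2", "https://child2.example.com"))
router.record("vm-42", "az-2")
print(router.availability_zones())  # ['az-1', 'az-2']
print(router.route("vm-42"))        # https://child2.example.com
```

The tenant only ever sees the availability zone names. The per-site endpoints stay inside the router, which mirrors how each child OpenStack appears to tenants only as an availability zone.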

Use Case

- Large-Scale Cloud Working Like One OpenStack Instance:

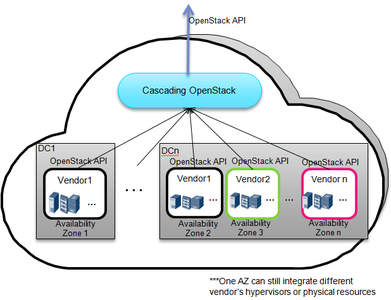

The cloud admin wants to offer tenants one cloud with one OpenStack API, and wants that cloud to work as one OpenStack instance. The cloud is distributed across multiple data centers, or sits in a single very large data center (for example, 100k nodes). It will grow gradually as capacity is expanded. To avoid vendor lock-in, the cloud must also be buildable from multiple vendors' OpenStack distributions.

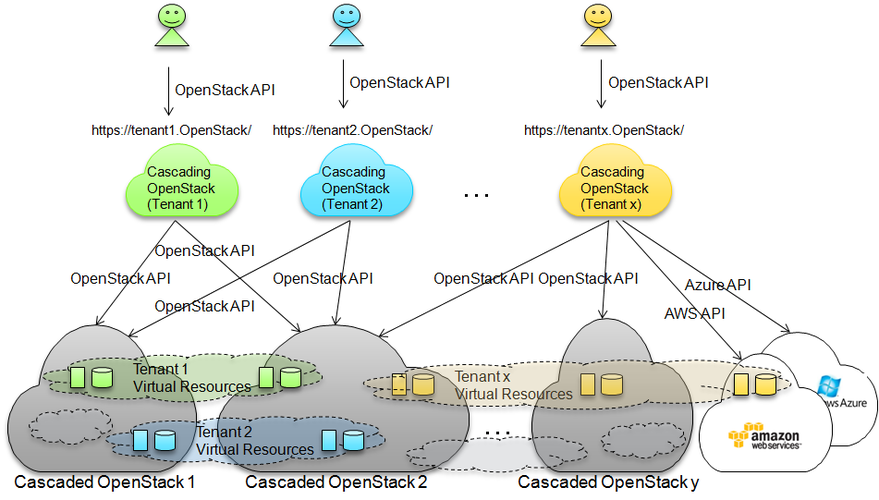

- Tenant-level virtual OpenStack service over hybrid, federated or multiple OpenStack-based clouds:

(Video of an OpenStack cascading based hybrid cloud at the OpenStack Tokyo Summit: https://www.youtube.com/watch?v=XQN6jBg442o)

In a large-scale cloud provider's deployment, a tenant who accesses his cloud resources is redirected to the portal/API endpoint of the cascading OpenStack assigned to him. That cascading OpenStack acts as one virtual OpenStack, integrating his resources from multiple back-end clouds and serving him. Through the cascading OpenStack, the tenant has a global view of his resources, such as global quotas, images, virtual machines, interconnected networks, volumes and metering data.

The clouds behind the cascading OpenStack may be federated through KeyStone or use a shared KeyStone. Some may even be OpenStack clouds built on AWS, Azure or VMware vSphere. Because AWS/Azure drivers were developed for cascading, even a hybrid cloud can be integrated behind the cascading OpenStack. The cloud can consist of an unlimited number of OpenStack instances in the pool (or even hybrid resources).

In this deployment scenario, a cloud can achieve unlimited scalability while still giving each tenant a consistent global view. There is no single unified cascading layer. Tenant-level resource orchestration among multiple OpenStack clouds is fully distributed (even geographically), with no central point at all. The database and load of one cascading OpenStack are very small, which makes disaster recovery and backup easy. Multiple tenants may share one cascading OpenStack to reduce resource waste, but the principle is to keep each cascading OpenStack as thin as possible.
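The per-tenant assignment described above can be sketched as follows. The sketch assumes each tenant is pinned to one cascading OpenStack and that a fixed cap keeps every cascading instance thin. `CascadingPool`, the cap and the endpoint URLs are hypothetical, because the source does not specify how tenants are assigned to cascading instances.

```python
class CascadingPool:
    """Hypothetical pool of cascading OpenStack API endpoints.

    Each tenant is pinned to exactly one cascading instance. Several tenants
    may share an instance, but a per-instance cap keeps each one thin."""

    def __init__(self, endpoints, max_tenants_per_instance):
        self.endpoints = list(endpoints)
        self.cap = max_tenants_per_instance
        self.assignment = {}                          # tenant id -> endpoint
        self.load = {ep: 0 for ep in self.endpoints}  # endpoint -> tenant count

    def endpoint_for(self, tenant_id):
        """Return the tenant's cascading endpoint, assigning one on first use."""
        if tenant_id in self.assignment:
            return self.assignment[tenant_id]
        candidates = [ep for ep in self.endpoints if self.load[ep] < self.cap]
        if not candidates:
            raise RuntimeError("all cascading instances are full; add another one")
        # Least-loaded instance wins; ties go to the earlier endpoint in the list.
        ep = min(candidates, key=lambda e: self.load[e])
        self.assignment[tenant_id] = ep
        self.load[ep] += 1
        return ep


pool = CascadingPool(
    ["https://cascading-1.example.com", "https://cascading-2.example.com"],
    max_tenants_per_instance=2,
)
print(pool.endpoint_for("tenant-a"))  # https://cascading-1.example.com
print(pool.endpoint_for("tenant-b"))  # https://cascading-2.example.com
```

Each instance holds the state of only a few tenants, so growing the number of tenants means adding another endpoint to the pool rather than growing one central instance.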

Motivation

The requirement and driving force behind multi-site cloud integration is cross-DC / cross-OpenStack resource orchestration. Globally addressable tenants result in global services. A tenant's virtual resources will be distributed across multiple sites but connected by L2/L3 networking. Please refer to the issues of OpenStack Multi-Region Mode.

- Ecosystem-friendly open API for unified multi-site resource orchestration

Ecosystem-friendly open API: It took almost 4 years for OpenStack to grow its ecosystem, so the OpenStack API must be retained for distributed but unified multi-site resource orchestration.

- Multi-site clouds require multi-vendor OpenStack distributions, multiple OpenStack instances and multiple OpenStack versions to co-exist

Multi-vendor: an anti-vendor-lock-in business policy. Multi-instance: each vendor has its own OpenStack distribution, with different OpenStack instances for different sites. Multi-version: step-wise cloud construction with gradual upgrades.

- RESTful open API/CLI for each site

OpenStack API in each site: an open, de facto standard API keeps each site always workable and manageable on its own. Different vendors or cloud admins can install, upgrade and maintain each site independently.

Refer to the slides “Building Multi-Site and Multi-OpenStack Cloud with OpenStack cascading” for more background.

From a technology point of view, building a large-scale distributed OpenStack-based cloud poses big challenges. An example is a multi-site cloud with 1 million VMs or 100k hosts.

Naturally, there are two ways to do that:

1. Scale up a single monolithic OpenStack region, but:

- It is a big challenge for a single OpenStack to manage such a scale, for example 1 million VMs or 100K hosts.

- It cannot provide a real fault isolation area like EC2's availability zones. The whole cloud is tied into one OpenStack because everything shares one RPC message bus and database.

- A single huge monolithic system brings high risk to O&M and troubleshooting. Even the most skilled ops team faces a big challenge handling software rolling upgrades, configuration changes, etc.

- Integrating heterogeneous vendors' infrastructure is time-consuming, yet large-scale clouds strongly demand that multiple vendors' infrastructure co-exist.

2. Set up hundreds of OpenStack regions with discrete API endpoints, but:

- The operator has to buy or develop his own cloud management platform to integrate the discrete clouds into one cloud, and the OpenStack API ecosystem is lost.

- Or, the cloud is left as separate resource islands without any association.

Inspiration

It’s not reinventing the wheel. The OpenStack cascading solution is inspired by:

- remote clustered hypervisors, such as vCenter, and Ironic, running under Nova, which allow Nova to scale further.

- the pluggable driver/agent architecture of Nova/Cinder/Neutron

- the magic FRACTAL, which is recursive and self-similar and grows to scale. Please refer to “OpenStack cascading and fractal” for more information.

This inspiration leads to the following conclusions:

- Handle cascaded Nova/Cinder the same way vCenter and Ironic are handled

- Handle cascaded Neutron the same way as the L2 OVS agent / L3 DVR agent. The challenge is to make L2/L3 networking across cascaded OpenStacks work like networking inside one OpenStack

Each cascaded OpenStack then acts like a huge compute node, and the cascading OpenStack acts like the controller node.

PoC Architecture

All information described here applies to the PoC only.

For the detailed PoC architecture design, please refer to the following:

- SlideShare: Introduction of OpenStack cascading solution (last edited: Apr. 27, 2015)

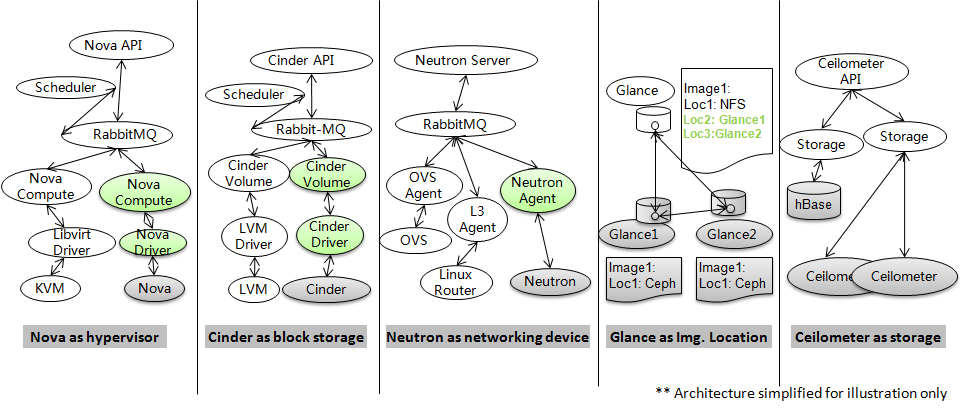

Normally, Nova uses KVM or another hypervisor as the compute virtualization backend. Cinder uses LVM or other block storage as the storage backend. Neutron uses OVS or a similar component as the L2 backend and a Linux router as the L3 agent, and so on.

The core architectural idea of OpenStack cascading is to plug one OpenStack service in as the backend of the same service:

- Nova as the hypervisor backend of Nova
- Cinder as the block storage backend of Cinder
- Neutron as the backend of Neutron
- Glance as one image location of Glance
- Ceilometer as the store of Ceilometer
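The toy sketch below illustrates the “Nova as the hypervisor backend of Nova” idea. It does not use Nova's real driver interface, which has a much larger `spawn()` signature. `HypervisorDriver`, `LocalHypervisorDriver`, `NovaProxyDriver` and `Nova` are simplified hypothetical classes. The child is also called in-process here rather than over the REST API.

```python
from abc import ABC, abstractmethod


class HypervisorDriver(ABC):
    """Simplified, hypothetical stand-in for a Nova hypervisor driver."""

    @abstractmethod
    def spawn(self, name: str) -> str:
        """Boot a VM and return where it landed."""


class LocalHypervisorDriver(HypervisorDriver):
    """Leaf driver: pretends to boot a VM on a local hypervisor such as KVM."""

    def __init__(self, host: str):
        self.host = host

    def spawn(self, name: str) -> str:
        return f"{name}@{self.host}"


class Nova:
    """Toy 'Nova' with a single public API call that delegates to its driver."""

    def __init__(self, driver: HypervisorDriver):
        self.driver = driver

    def boot(self, name: str) -> str:
        return self.driver.spawn(name)


class NovaProxyDriver(HypervisorDriver):
    """Cascading driver: its 'hypervisor' is another Nova, which it reaches
    only through that Nova's public API (here, boot())."""

    def __init__(self, child: Nova):
        self.child = child

    def spawn(self, name: str) -> str:
        return self.child.boot(name)


child = Nova(LocalHypervisorDriver("kvm-host-7"))  # cascaded Nova
parent = Nova(NovaProxyDriver(child))              # cascading Nova
print(parent.boot("web-1"))  # web-1@kvm-host-7
```

`NovaProxyDriver` depends only on the child's public API. Stacking a third level (`Nova(NovaProxyDriver(parent))`) therefore needs no new code, which is the recursive, self-similar property that the Inspiration section attributes to fractals.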

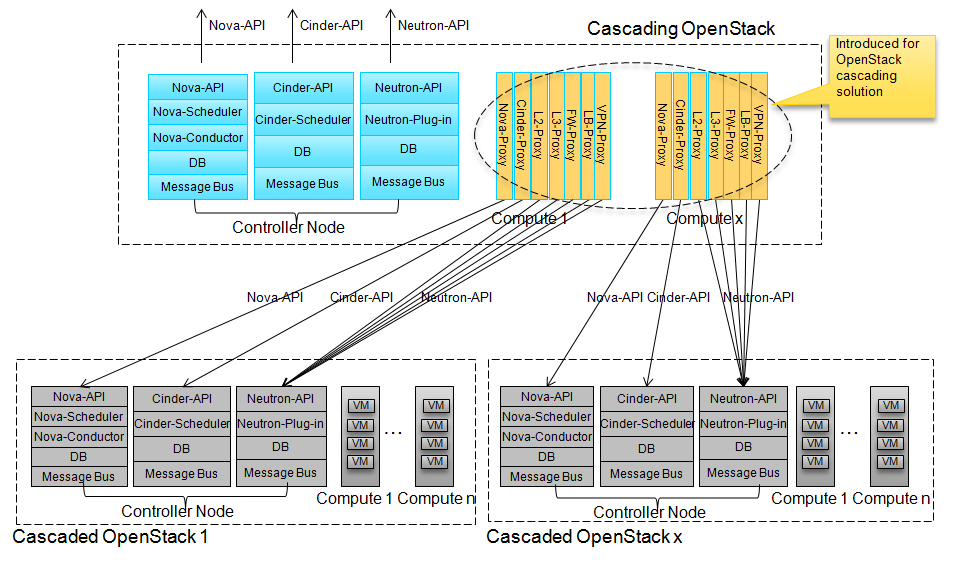

OpenStack cascading covers Nova, Cinder, Neutron, Glance and Ceilometer. KeyStone is a global service shared by the cascading and cascaded OpenStacks (or KeyStone federation is used), and Heat consumes the cascading OpenStack API. Therefore, KeyStone and Heat need no cascading. The following picture shows the architecture for Nova/Cinder/Neutron cascading.

- Nova-Proxy: the hypervisor driver for Nova, running on the Nova-Compute node. Nova-Proxy makes the cascaded Nova the hypervisor backend of Nova. It transfers VM operations to the corresponding cascaded Nova. It is also responsible for attaching volumes and networks to the VM in the cascaded OpenStack.

- Cinder-Proxy: the Cinder-Volume driver for Cinder, running on the Cinder-Volume node. Cinder-Proxy makes the cascaded Cinder the block storage backend of Cinder. It transfers volume operations to the corresponding cascaded Cinder.

- L2-Proxy: plays a role similar to the OVS-Agent. L2-Proxy makes the cascaded Neutron the L2 backend of Neutron. It completes L2 networking in the cascaded OpenStack, including cross-OpenStack networking.

- L3-Proxy: plays a role similar to the DVR L3-Agent. L3-Proxy makes the cascaded Neutron the L3 backend of Neutron. It completes L3 networking in the cascaded OpenStack, including cross-OpenStack networking.

- FW-Proxy: plays a role similar to the FWaaS-Agent. FW-Proxy makes the cascaded Neutron the FWaaS backend of Neutron.

- LB-Proxy: plays a role similar to the LBaaS-Agent. LB-Proxy makes the cascaded Neutron the LBaaS backend of Neutron.

- VPN-Proxy: plays a role similar to the VPNaaS-Agent. VPN-Proxy makes the cascaded Neutron the VPNaaS backend of Neutron.
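One piece of the L2-Proxy's job can be sketched as below. When a VM on a cascading network first lands in a cascaded OpenStack, the proxy mirrors that network into the cascaded Neutron. `FakeChildNeutron` is a hypothetical in-memory stand-in for the cascaded Neutron API, and `L2Proxy` and its id format are illustrative only. Cross-OpenStack L2 connectivity is deliberately left out.

```python
import itertools


class FakeChildNeutron:
    """Hypothetical in-memory stand-in for one cascaded Neutron API."""

    def __init__(self, site: str):
        self.site = site
        self._ids = itertools.count(1)
        self.networks = {}  # cascaded network id -> CIDR

    def create_network(self, cidr: str) -> str:
        net_id = f"{self.site}-net-{next(self._ids)}"
        self.networks[net_id] = cidr
        return net_id


class L2Proxy:
    """Mirrors cascading-level networks into one cascaded Neutron on demand.

    The cascading-id -> cascaded-id map makes the operation idempotent:
    each cascading network is created at most once per cascaded site."""

    def __init__(self, child: FakeChildNeutron):
        self.child = child
        self.mapping = {}  # cascading network id -> cascaded network id

    def ensure_network(self, cascading_net_id: str, cidr: str) -> str:
        if cascading_net_id not in self.mapping:
            self.mapping[cascading_net_id] = self.child.create_network(cidr)
        return self.mapping[cascading_net_id]


proxy = L2Proxy(FakeChildNeutron("site1"))
print(proxy.ensure_network("net-A", "10.0.0.0/24"))  # site1-net-1
print(proxy.ensure_network("net-A", "10.0.0.0/24"))  # site1-net-1 (reused)
```

Keeping the id mapping in the proxy lets the cascading Neutron keep its own network ids while each cascaded Neutron allocates local ones. This is the same backend-of-the-same-service pattern shown for Nova.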

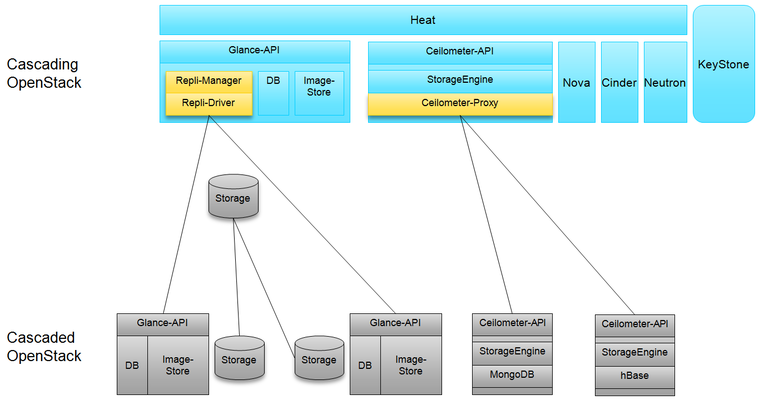

For Glance, cascading is not a must. It depends on the backend store and the deployment decision, and either a global Glance or Glance with cascading works with the OpenStack cascading solution. There are three ways to do Glance cascading, and each makes the cascaded Glance one of the image locations:

- The first way is to replicate the image when image data is uploaded, an image location is patched, or a snapshot is created. This gives a better end-user experience with a shorter VM boot time, but the technology involved is more complex.

- The second way is to replicate the image data only when the image is used for the first time in the corresponding cascaded OpenStack. The first VM boot then takes longer, but this approach is much simpler and more robust.

- The third way is to register, in the cascading Glance, only the location of an image that already exists in the cascaded Glance.
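The second and third ways can be sketched with a small location catalog. The sketch assumes the cascading Glance tracks, per image, which cascaded sites already hold a copy. `ImageCatalog`, the `glance://` location format and the `copies` counter are hypothetical. The counter stands in for the actual data copy, which is not implemented.

```python
class ImageCatalog:
    """Hypothetical view held by the cascading Glance: for every image, the
    cascaded sites that already hold a copy, each stored as an image location."""

    def __init__(self):
        self.locations = {}  # image id -> {site: location}
        self.copies = 0      # replication jobs triggered so far

    def register(self, image_id: str, site: str) -> None:
        """Record an existing copy without moving data (the third way)."""
        self.locations.setdefault(image_id, {})[site] = f"glance://{site}/{image_id}"

    def location_for_boot(self, image_id: str, target_site: str) -> str:
        """Lazy replication (the second way): copy only on first use in a site."""
        sites = self.locations[image_id]
        if target_site not in sites:
            self.copies += 1  # placeholder for the actual data copy
            sites[target_site] = f"glance://{target_site}/{image_id}"
        return sites[target_site]


catalog = ImageCatalog()
catalog.register("img-1", "site-a")
print(catalog.location_for_boot("img-1", "site-b"))  # glance://site-b/img-1
print(catalog.copies)                                # 1
print(catalog.location_for_boot("img-1", "site-b"))  # glance://site-b/img-1
print(catalog.copies)                                # 1 (already replicated)
```

The first way would move the copy step to upload/snapshot time and copy to every policy-selected site before any boot. That is the job described for Repli-Manager.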

For Ceilometer, cascading has to be introduced. Ceilometer generates a huge volume of data, so a single shared Ceilometer cannot serve all sites.

- Repli-Manager: replicates images between the cascading OpenStack and the cascaded OpenStacks selected by policy. It is only required when images are synchronized on image data upload, image location patch, or snapshot creation.

- Ceilometer-Proxy: forwards requests to the destination Ceilometer, or collects information from several Ceilometers.
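The two Ceilometer-Proxy behaviours named above are forwarding to one destination and collecting from several. Both can be sketched as a fan-out/merge, assuming samples are plain dicts with `meter` and `volume` keys. `query_site`, `query_one`, `query_all` and the sample layout are hypothetical, and no real Ceilometer client call is used.

```python
from concurrent.futures import ThreadPoolExecutor


def query_site(samples, meter):
    """Hypothetical per-site query; a real proxy would call that site's
    Ceilometer API over HTTP instead of filtering a local list."""
    return [s for s in samples if s["meter"] == meter]


def query_one(sites, site, meter):
    """Forward a request to a single destination Ceilometer."""
    return query_site(sites[site], meter)


def query_all(sites, meter):
    """Fan a query out to every cascaded Ceilometer in parallel and merge the
    answers. Raw samples stay in their own site; only results travel."""
    with ThreadPoolExecutor() as pool:
        parts = list(pool.map(lambda samples: query_site(samples, meter), sites.values()))
    merged = [s for part in parts for s in part]
    return {"count": len(merged), "total": sum(s["volume"] for s in merged)}


sites = {
    "site-a": [{"meter": "cpu_util", "volume": 10.0}, {"meter": "disk", "volume": 5.0}],
    "site-b": [{"meter": "cpu_util", "volume": 30.0}],
}
print(query_all(sites, "cpu_util"))  # {'count': 2, 'total': 40.0}
```

Only the aggregated answer crosses sites, which is consistent with the point above that the raw data volume is too large for one shared Ceilometer.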

Value to end user

- Tenant has a global view of resources in multiple clouds

A tenant's resources (VMs, volumes) may be distributed across multiple OpenStacks that use a shared KeyStone or KeyStone federation. These resources are interconnected through L2/L3 networking with advanced services such as FW, LB and VPN. The tenant can manage these distributed resources through the cascading OpenStack as if one virtual OpenStack had been allocated to him. He gets a global view of his resources, such as images, metering data, VMs, volumes and networks. The tenant also gets global quota control and resource utilization data through the cascading OpenStack.

- Tenant-level global IP address management.

The cascading OpenStack can act as the tenant's global IP address manager across multiple cascaded OpenStacks.
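A minimal sketch of such tenant-level IP address management follows, using Python's standard `ipaddress` module. It assumes one tenant subnet spans several cascaded OpenStacks and that the first usable host is reserved for the router. `GlobalIpam` is a hypothetical class, and address release/reuse is omitted for brevity.

```python
import ipaddress


class GlobalIpam:
    """Hypothetical tenant-level IPAM in the cascading layer.

    One tenant subnet may span several cascaded OpenStacks, so addresses are
    handed out from a single pool here; each cascaded Neutron would then be
    told which fixed IP to use, so no two sites can pick the same address."""

    def __init__(self, cidr: str):
        self._free = ipaddress.ip_network(cidr).hosts()  # iterator of usable hosts
        self.gateway = str(next(self._free))  # assumption: first host is the router
        self.allocated = {}  # ip -> (site, port id)

    def allocate(self, site: str, port_id: str) -> str:
        try:
            ip = str(next(self._free))
        except StopIteration:
            raise RuntimeError("subnet exhausted") from None
        self.allocated[ip] = (site, port_id)
        return ip


ipam = GlobalIpam("10.0.0.0/29")
print(ipam.allocate("site-a", "port-1"))  # 10.0.0.2
print(ipam.allocate("site-b", "port-2"))  # 10.0.0.3
```

Allocations for site-a and site-b come from the same pool, so the two sites can never pick the same address.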

- Virtual machine / volume / vApp migration from one data center to another:

With the help of OpenStack cascading, VM/volume migration from one DC to another is feasible. Refer to the blog post cross-dc-app-migration-over-openstack-cascading.

- High-availability applications across different physical data centers.

With overlay virtual L2/L3 networking across data centers and image synchronization, application backup, disaster recovery and load balancing are easy to implement in the distributed cloud.

Value to cloud admin

See the document linked in the section “Architecture of OpenStack cascading” for more details. The major advantages of the architecture are listed here:

- The cascading OpenStack aggregates many child OpenStack clouds into one cloud via the standard OpenStack API, and exposes a single OpenStack API endpoint to tenants.

- If one cascaded OpenStack fails, the rest of the cloud still works and remains accessible. Each cascaded OpenStack can therefore act as an Amazon-like availability zone. If the cascading OpenStack fails, every cascaded OpenStack can still be managed and used independently via the OpenStack API. In phase I, provisioning is not allowed in that situation, to keep the cascading and cascaded OpenStacks consistent. In phase II, once the consistency issue is solved, provisioning can be allowed even while the cascading OpenStack is down.

- The cascading OpenStack manages the cascaded OpenStacks via the standard OpenStack API. The OpenStack API is a RESTful API with backward compatibility and multiple API versions in parallel. Therefore, multiple vendors and multiple versions of OpenStack can co-exist in one large-scale cloud.

- Each cascaded OpenStack, and the cascading OpenStack itself, can be managed independently as a standalone OpenStack. Upgrades, operation and maintenance can therefore be done separately at availability-zone granularity.

- The cascading OpenStack manages everything through the standard OpenStack API. A new vendor's physical resources with a built-in OpenStack distribution can therefore be integrated into the cloud in a plug-and-play fashion, just like a USB device plugged into a PC. This makes the OpenStack API the software-defined “PCI” bus of the cloud era.

- Scalability applies not only within one cascaded OpenStack, but also across multiple vendors' cascaded OpenStacks and federated OpenStacks spread over many data centers. Because the OpenStack API is a RESTful API, one cascading OpenStack can manage multiple OpenStacks distributed across multiple data centers over a WAN or LAN.
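The fault-isolation property in the list above can be illustrated with a small placement sketch. It assumes the cascading layer knows each child's free capacity and health. `pick_az` and its inputs are hypothetical, and this is not the PoC's scheduling logic. "Pick the AZ with the most free slots" is simply the policy chosen for this sketch. The point is only that an unhealthy cascaded OpenStack is skipped rather than blocking the rest of the cloud.

```python
def pick_az(free_capacity, healthy, requested=None):
    """Hypothetical placement step in the cascading layer.

    free_capacity: availability zone -> free VM slots reported by that child
    healthy:       set of AZs whose cascaded OpenStack currently answers
    requested:     AZ explicitly asked for by the tenant, if any
    A failed cascaded OpenStack is simply skipped, so it cannot block
    provisioning in the other availability zones."""
    if requested is not None:
        if requested not in healthy:
            raise RuntimeError(f"availability zone {requested} is unavailable")
        return requested
    candidates = [az for az, free in free_capacity.items() if az in healthy and free > 0]
    if not candidates:
        raise RuntimeError("no healthy availability zone with free capacity")
    return max(candidates, key=lambda az: free_capacity[az])


capacity = {"az-1": 5, "az-2": 9, "az-3": 7}
print(pick_az(capacity, healthy={"az-1", "az-3"}))  # az-3 (az-2 is down)
```

A tenant who explicitly asks for a failed AZ gets an error. A tenant who does not specify an AZ is placed elsewhere, which matches the availability-zone behaviour described above.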

Proof of Concept

The PoC gives us confidence that the OpenStack cascading solution is feasible. Please refer to the Tricircle PoC in StackForge: https://github.com/stackforge/tricircle/tree/poc, or https://github.com/stackforge/tricircle/tree/stable/fortest

The primary contact for the PoC is Chaoyi Huang (joehuang@huawei.com)

PoC Scalability Test Report

Please refer to: Test report for OpenStack cascading solution to support 1 million VMs in 100 data centers

PoC live demo video

Only a live demo video is available for now, because it is difficult for us to set up a globally accessible online lab.

YouTube: https://www.youtube.com/watch?v=OSU6PYRz5qY

Youku (low quality, for those who can’t access YouTube): http://v.youku.com/v_show/id_XNzkzNDQ3MDg4.html

Vimeo: http://vimeo.com/107453159

Relationship with Tricircle

After the cascading PoC, an OpenStack project called Tricircle (https://wiki.openstack.org/wiki/Tricircle, https://github.com/openstack/tricircle) was started. Its design will be improved based on the lessons learned, and the project runs under the OpenStack big-tent guidelines.

Mailing list

Use the OpenStack dev list, and include [openstack-dev][tricircle] in the mail subject.

Discussion during the PoC

- Blog of Joe Huang: https://www.linkedin.com/today/author/23841540

- Discussion in the OpenStack dev-list: http://openstack.10931.n7.nabble.com/all-tc-Multi-clouds-integration-by-OpenStack-cascading-td54115.html

- Discussion in the OpenStack dev-list: https://www.mail-archive.com/openstack-dev@lists.openstack.org/msg36618.html

- Discussion in the OpenStack dev-list (Neutron scaling): https://www.mail-archive.com/openstack-dev%40lists.openstack.org/msg50128.html

- Talk at the Paris Summit: Building multi-site and multi-OpenStack cloud with OpenStack cascading (http://www.youtube.com/watch?v=-KOJYvhmxQI)
